Invicti Finds More Critical Vulnerabilities Than Competitors

In a competitive analysis against Snyk, StackHawk, and Tenable, Invicti detected the most critical vulnerabilities while delivering balanced scan performance and consistent coverage.

Key Findings

Test Overview

This evaluation measured how well leading Dynamic Application Security Testing (DAST) tools identify real vulnerabilities in running applications. The evaluation focused on vulnerability discovery by severity, scan execution characteristics, and behavioral consistency across targets, rather than optimization for any single application type.

The test environment consisted of 11 intentionally vulnerable web applications and APIs that represent modern and legacy architectures. Invicti supplied Miercom researchers with a canonical list of vulnerabilities that had been coded into the applications. This gave them a benchmark of expected vulnerabilities present in each target, along with their severity and exploitability.

Miercom ran the scans independently and also developed its own intentionally vulnerable web applications. It ran the same tests against these internal apps to validate the trends in this report and ensure Invicti’s results were not pre-engineered. Vendors are welcome to request access for independent validation or challenge testing, and competitors that disagree with the results presented in this report may request re-evaluation by Miercom. Miercom encourages continued engagement and re-evaluation so that results remain current as products evolve.

Measurements

Miercom measured total scan time, total vulnerabilities detected, and total number of critical, high, and medium severity findings.

Criteria

Tools were evaluated based on detection of known vulnerabilities in the application, accuracy of reported severity levels, coverage gaps where known vulnerabilities were missed, and number of excess findings (indicating duplicates or false positives).
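As a rough illustration of how these criteria could be turned into numbers, the sketch below compares a scanner's reported findings against a benchmark list using set operations. It is not Miercom's scoring method: the `ScanScore` type, the `score_scan` function, and all vulnerability identifiers are hypothetical, and it assumes findings can be matched to benchmark entries by a shared identifier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScanScore:
    detected: int          # benchmark vulnerabilities the scanner reported
    missed: int            # benchmark vulnerabilities it did not report (coverage gap)
    excess: int            # reported findings absent from the benchmark
    detection_rate: float  # detected / expected, between 0.0 and 1.0


def score_scan(expected: set[str], reported: set[str]) -> ScanScore:
    """Compare one scanner's findings against the benchmark for one target."""
    detected = expected & reported
    missed = expected - reported
    excess = reported - expected
    rate = len(detected) / len(expected) if expected else 0.0
    return ScanScore(len(detected), len(missed), len(excess), rate)


# Hypothetical identifiers, for illustration only (not taken from the report).
expected = {"sqli-login", "xss-comment", "idor-profile", "ssrf-import"}
reported = {"sqli-login", "xss-comment", "xss-comment-dup", "open-redirect"}

score = score_scan(expected, reported)
print(score)
```

In this toy example the scanner finds two of the four benchmark issues, misses two, and reports two findings that are not in the benchmark, which would need manual review as possible duplicates or false positives.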

Apps tested in the report

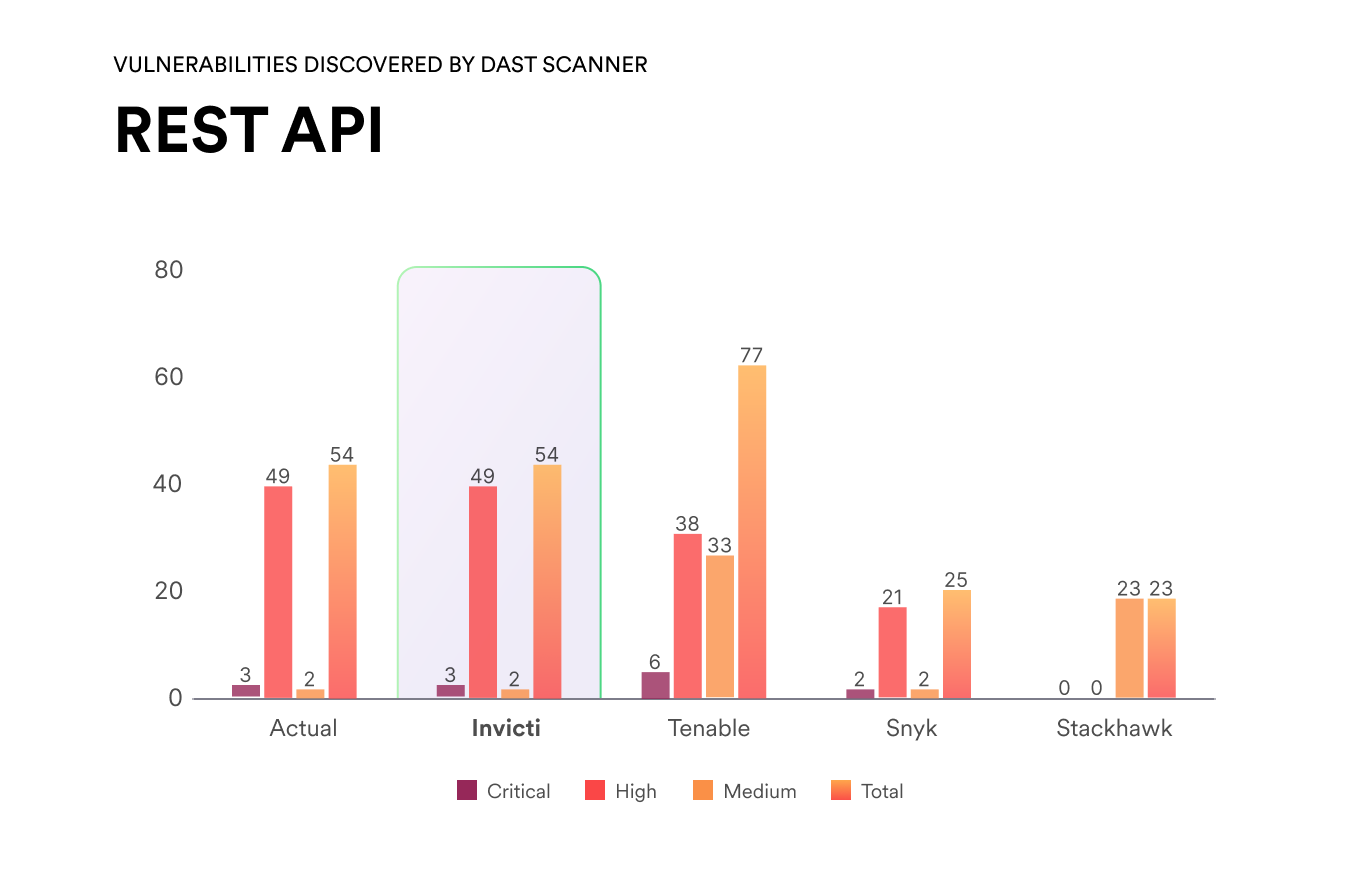

A production-style REST API intentionally seeded with common issues (injection, broken authentication, access control flaws) to measure how well scanners handle API-first back-end services with no browser UI.

An application exposing a GraphQL endpoint with a realistic schema, queries and mutations. Assesses GraphQL-specific attack patterns and introspection handling.

A classic ASP.NET web application running on the Windows/IIS stack, used to validate coverage of .NET-specific behaviors such as session management and framework-driven input handling.

A forum-style application with registration, login, user profiles, and threaded discussions, used to test scanners on authenticated, multi-step workflows and role-based access scenarios.

A PHP application with a reduced but varied attack surface, used to confirm scanner behavior on simpler targets and to compare against the Classic PHP application.

A web application built on a common Python web framework, representing modern non-Java/.NET stacks and allowing validation of language-specific behaviors and templating issues.

A single-page application built with a contemporary JavaScript framework, consuming JSON APIs and using client-side routing and token-based authentication. It tests the scanners’ ability to crawl and exercise rich front-end applications.

A traditional server-rendered PHP site following older coding patterns (form posts, query-string parameters), representing legacy LAMP-style applications still common in many environments.

A simple micro-blog style application where users can post and interact with short messages, intended to exercise input validation, output encoding, and authentication/authorization paths.

A blogging application with content creation and commenting features, combining public and authenticated areas to evaluate crawling depth, state handling, and stored input issues.

A compact RESTful service focused on CRUD operations, used to test support for machine-readable API definitions and HTTP verb coverage across endpoints.

Results

Below, you'll see a breakdown of how many critical vulnerabilities each vendor's scanner found across the different scans, along with a selection of individual test graphs featured in the full Miercom report.

Critical Vulnerabilities Found by Target

This matrix shows how many known critical vulnerabilities each scanner identified across different application types. Each target contained intentionally injected vulnerabilities so that the researchers had a benchmark to compare performance (this is the "Actual" column).

Invicti was the only scanner to identify all critical vulnerabilities across every application type — a 100% detection rate. Competing tools frequently missed critical issues, particularly in modern architectures like APIs and GraphQL, highlighting significant gaps in real-world detection coverage.

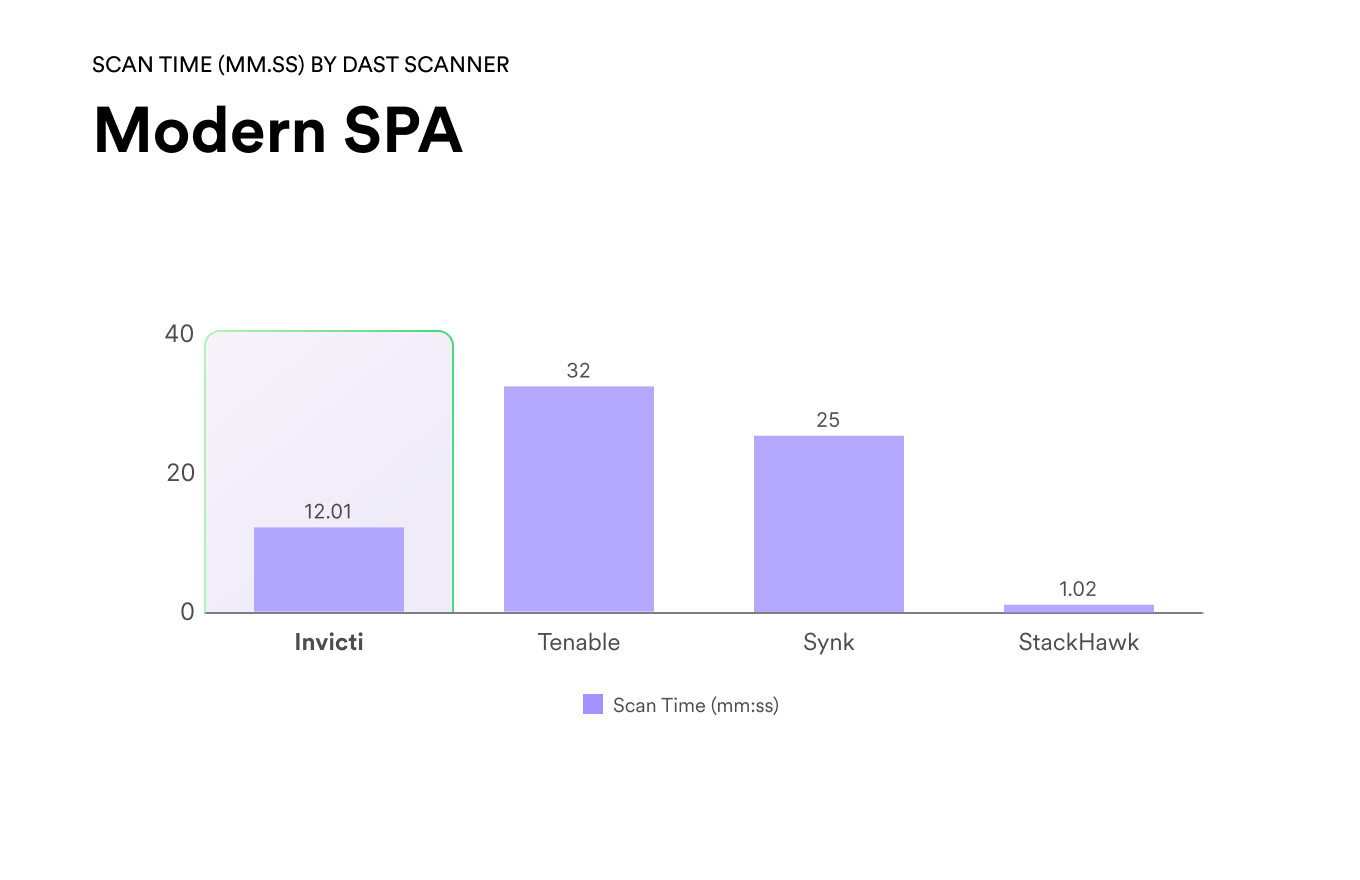

Scan Speed by Target

This matrix shows the time each scanner took to complete scans across different application types. Scan durations are presented in minutes for direct comparison.

Faster scan times did not correlate with better results. In multiple cases, the fastest scanners missed critical vulnerabilities entirely, while longer scan times did not consistently improve detection. Invicti maintained a balance between scan duration and depth, delivering complete coverage without excessive runtime.

Individual Test Results

On the REST API target, Invicti reported 54 total validated findings, including 3 critical, 49 high, and 2 medium severity vulnerabilities, demonstrating strong coverage of high-impact issues. Tenable reported the highest total number of findings at 77. Snyk reported 25 total findings, consisting of 2 critical, 21 high, and 2 medium severity vulnerabilities. StackHawk reported 23 findings, without identifying any critical or high severity vulnerabilities.

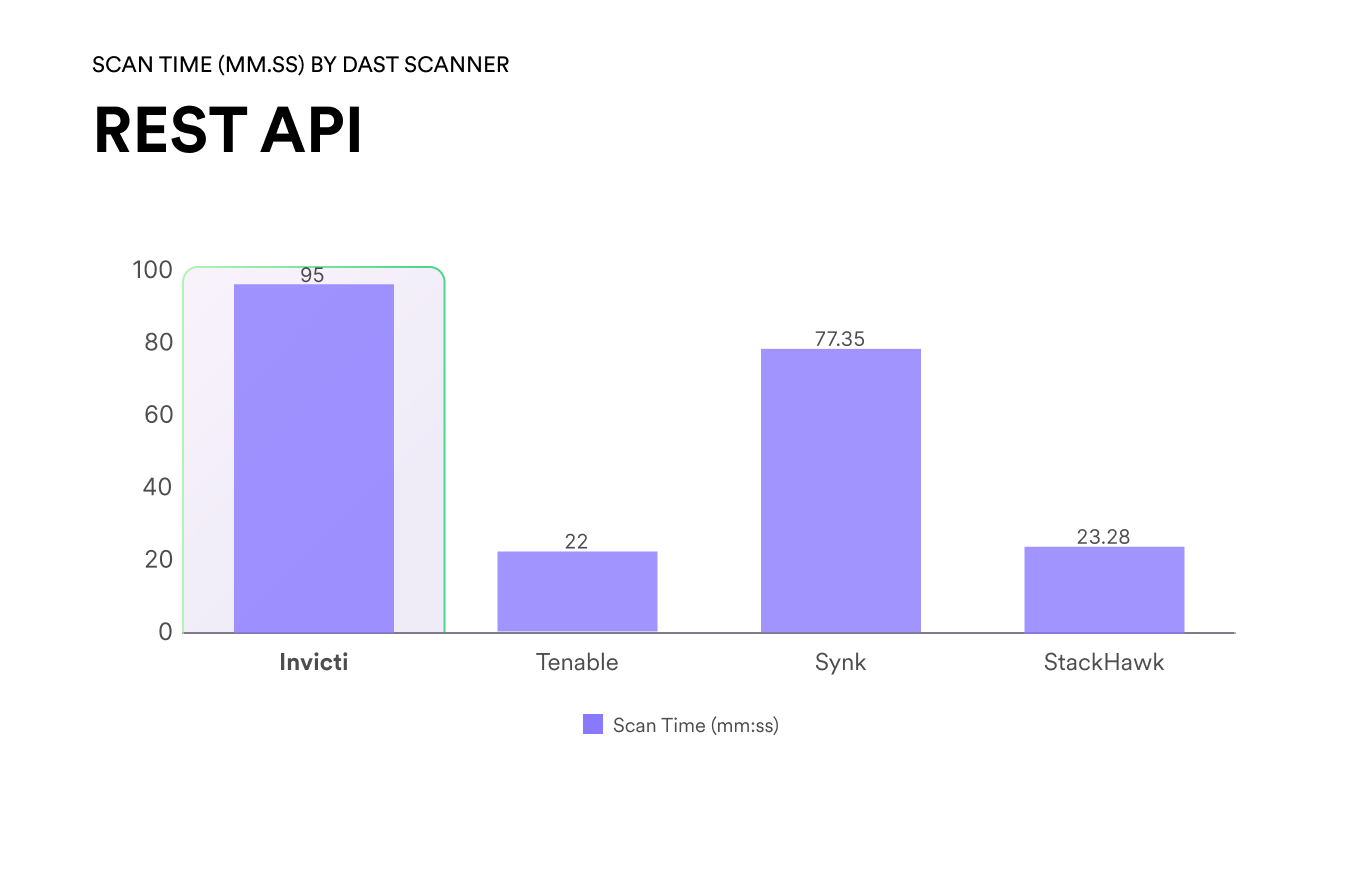

Scan duration varied significantly across products. Tenable completed scanning in 22:00, the shortest observed scan time. StackHawk completed scanning in 23:28. Snyk required 77:35 to complete scanning, while Invicti required the longest scan duration at 95:00 on the same REST API target.

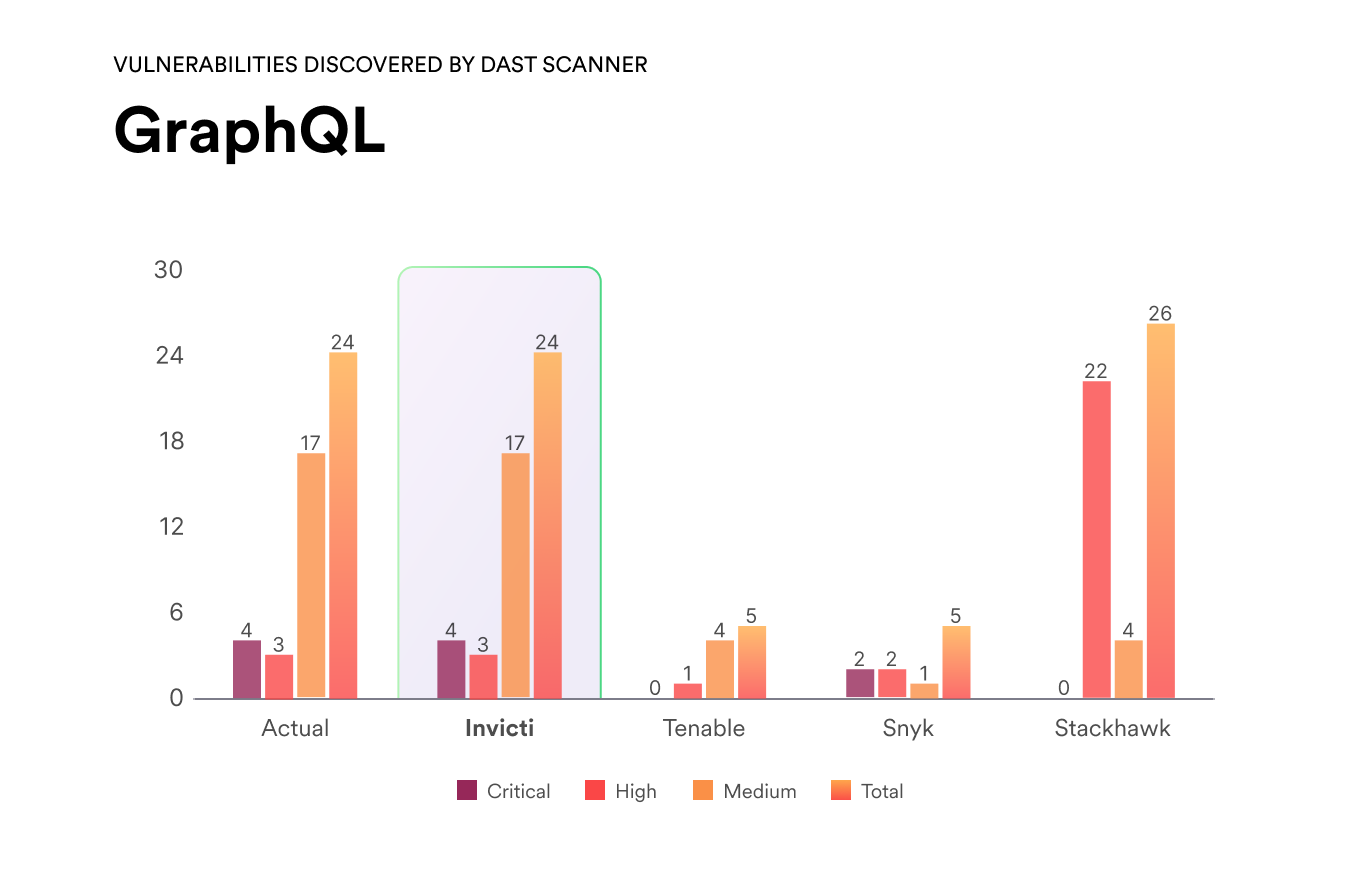
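To make the REST API scan times directly comparable, the sketch below converts them to decimal minutes and lists them next to the total findings quoted above. It assumes the durations are in `mm:ss` format and that the finding totals refer to the same REST API target. The `to_minutes` helper is illustrative and not part of any vendor tooling.

```python
def to_minutes(mm_ss: str) -> float:
    """Convert an 'mm:ss' duration string to decimal minutes (format is an assumption)."""
    minutes, seconds = mm_ss.split(":")
    return int(minutes) + int(seconds) / 60


# Figures quoted in this section for the REST API target.
rest_api_durations = {
    "Tenable": "22:00",
    "StackHawk": "23:28",
    "Snyk": "77:35",
    "Invicti": "95:00",
}
rest_api_findings = {"Invicti": 54, "Tenable": 77, "Snyk": 25, "StackHawk": 23}

# Rank scanners from shortest to longest scan time.
ranked = sorted(rest_api_durations, key=lambda name: to_minutes(rest_api_durations[name]))

for scanner in ranked:
    minutes = to_minutes(rest_api_durations[scanner])
    print(f"{scanner:<10} {minutes:6.2f} min  {rest_api_findings[scanner]:3d} findings")
```

Listing duration and finding count side by side shows that the ordering by scan time differs from the ordering by total findings on this target.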

Invicti reported 24 validated findings, including 4 critical, 3 high, and 17 medium severity vulnerabilities, representing the most complete coverage across all severity levels. StackHawk reported 26 total findings, exceeding the validated count by 2 findings, driven primarily by 22 high severity issues. Tenable reported 5 total findings, while Snyk also reported 5 findings, both reflecting substantially lower overall coverage on the GraphQL target.

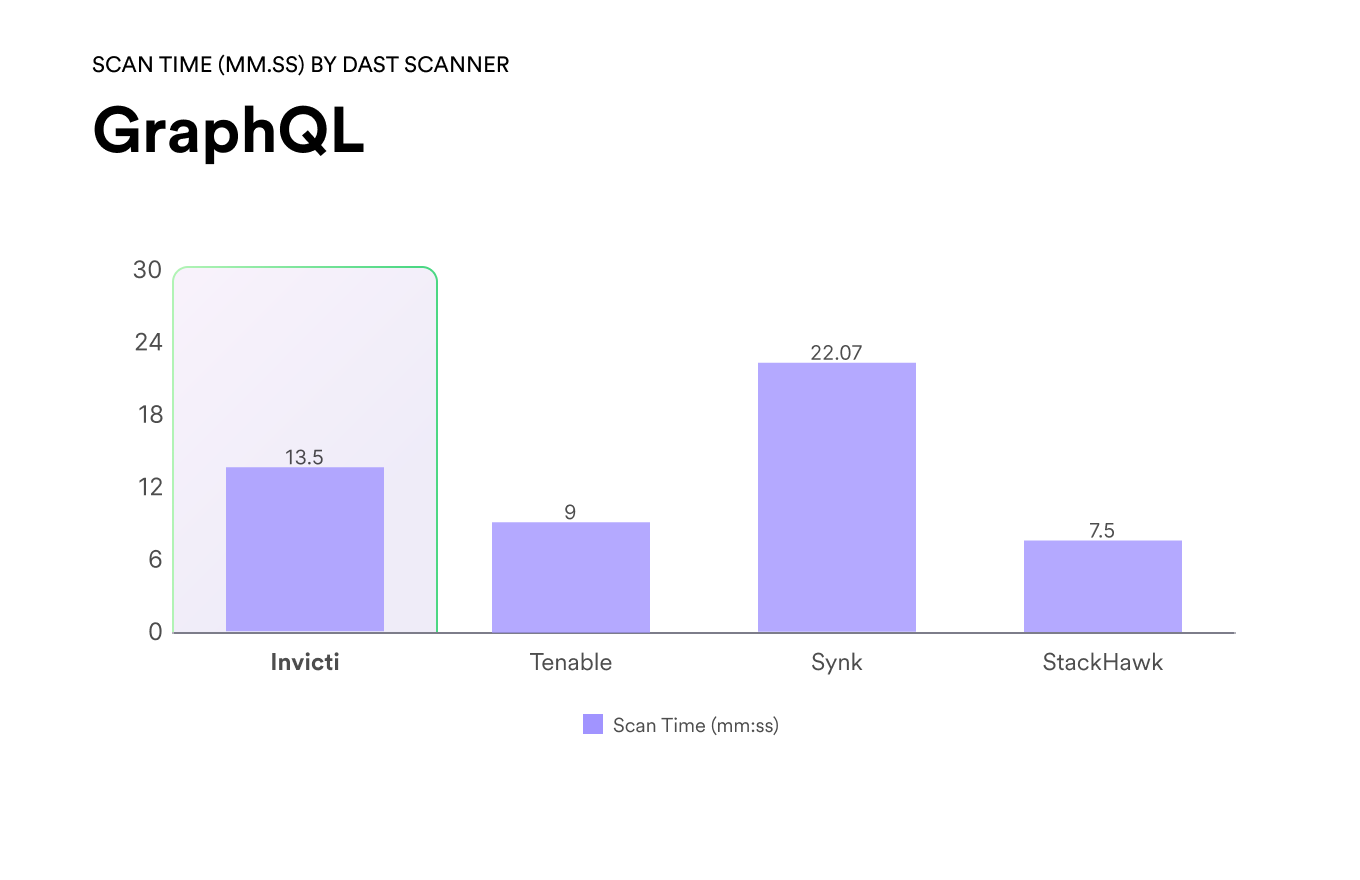

StackHawk completed scanning in 07:50, while Tenable completed scanning in 09:00. Invicti completed scanning in 13:50, balancing scan duration with depth of GraphQL-specific analysis. Snyk required the longest scan time at 22:07, exceeding all other scanners on the same target.

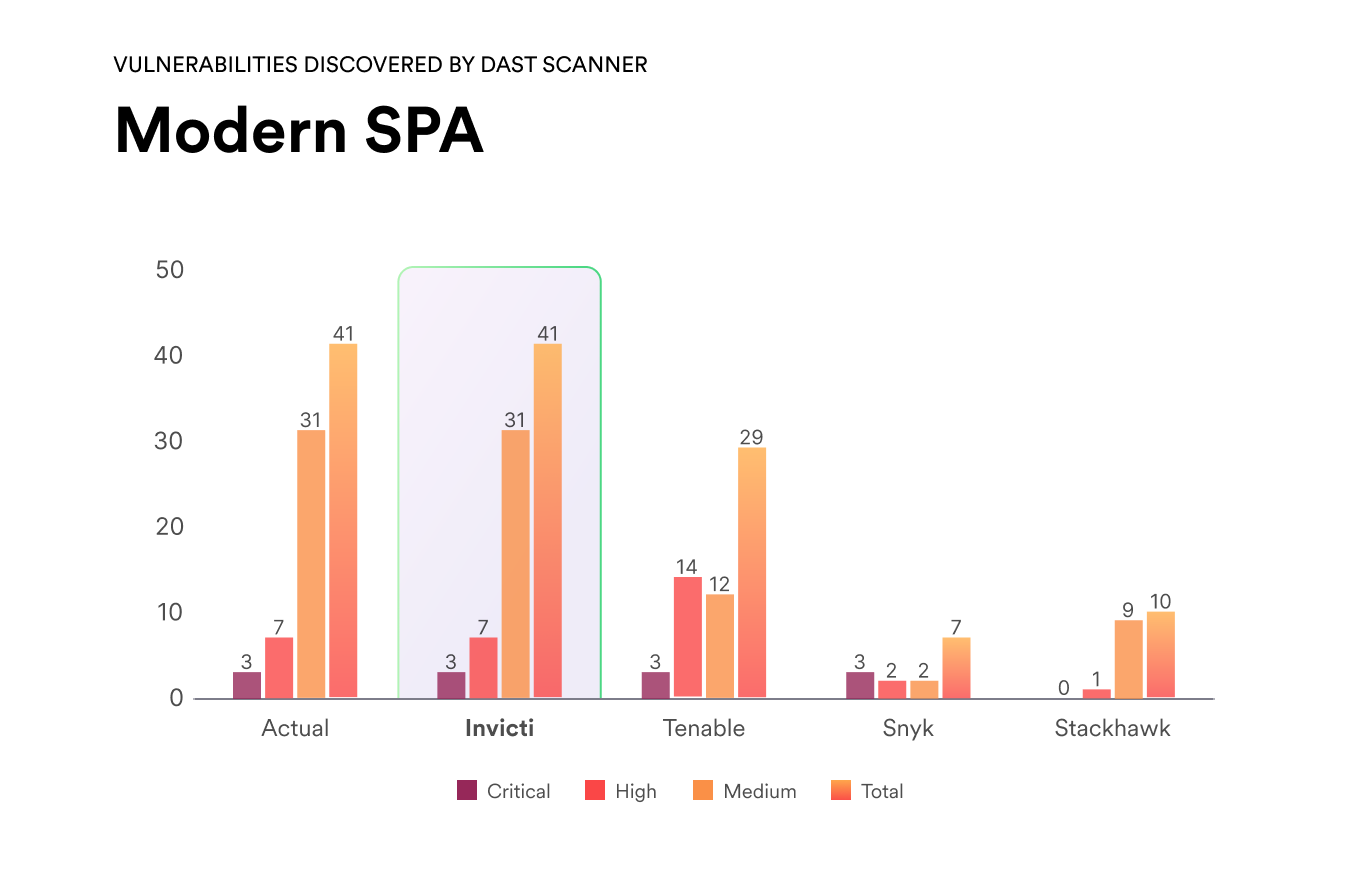

Invicti reported a total of 41 validated findings, including 3 critical, 7 high, and 31 medium severity vulnerabilities, representing the most comprehensive coverage across all severity tiers. Tenable reported 29 findings, reflecting lower overall coverage than Invicti despite identifying the same number of critical vulnerabilities. StackHawk reported 10 total findings, while Snyk reported 7 findings, including 3 critical, 2 high, and 2 medium severity vulnerabilities.

StackHawk completed scanning in 01:02, the shortest observed time. Invicti completed scanning in 12:01, balancing scan duration with breadth of findings. Snyk required 25:00 to complete scanning, while Tenable required the longest scan time at 32:00, exceeding all other scanners.

Industry-wide recognition